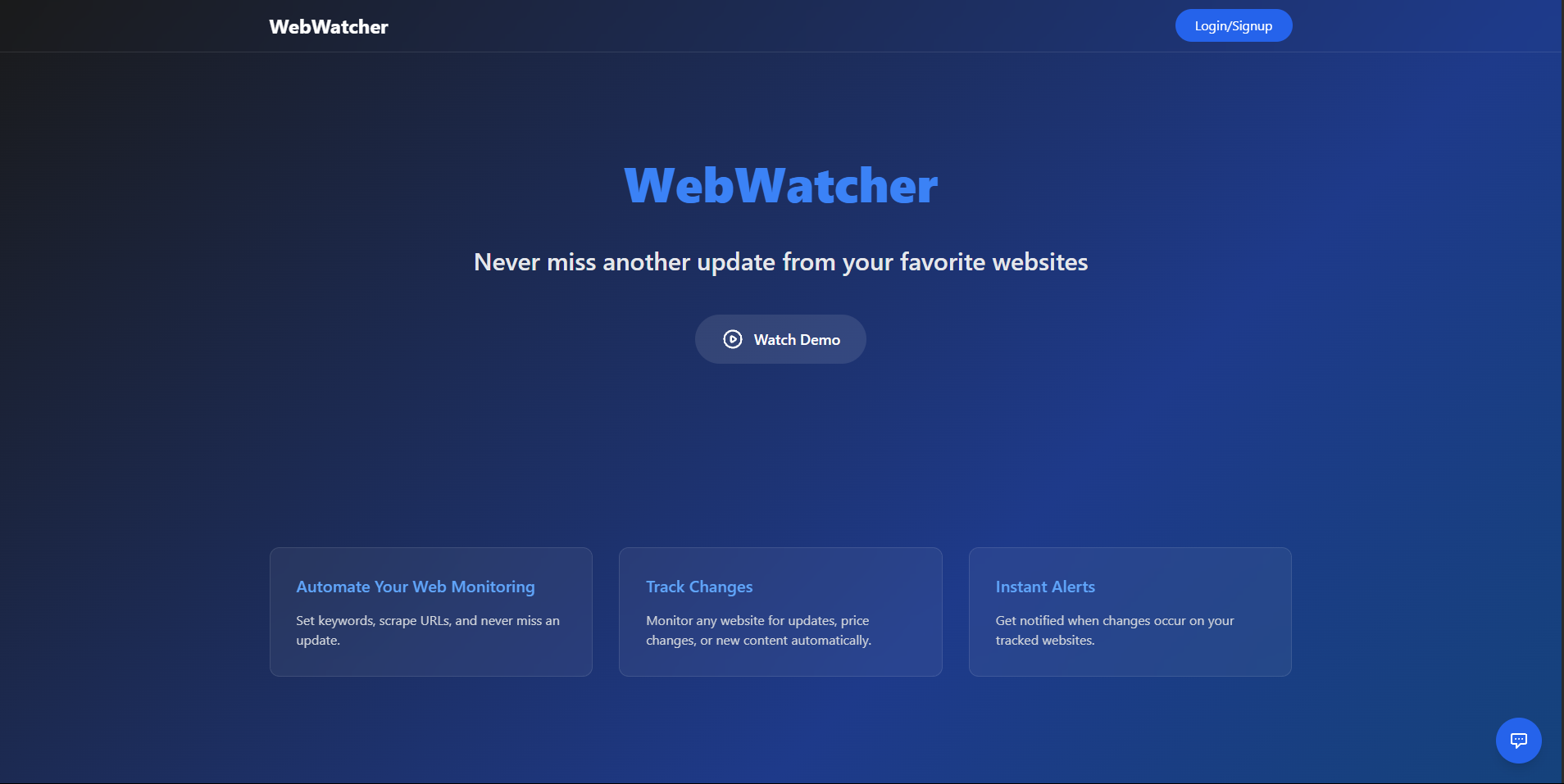

Project Overview

WebWatcher is a full-stack web application that automatically monitors websites for specific keywords and notifies users when matches are found. Originally conceived during my job search to track company career pages, it evolved into a versatile platform for monitoring content changes across the web, from job listings and product availability to news articles and more.

Key Features

User Management

- Secure Authentication: Complete sign-up/login system using JWT for authentication tokens and bcrypt for password hashing

- User-specific Watchlists: Each user maintains their own personalized monitoring setup

Monitoring Capabilities

- Custom Keyword Tracking: Users can define up to 10 keywords they want to monitor

- Multiple URL Support: Up to 10 URLs can be tracked simultaneously

- Intelligent Scraping: System parses website content looking for keyword matches
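The keyword check at the heart of a scan can be sketched as follows. This is a minimal illustration, not the project's actual code: the function name `findKeywordMatches`, the case-insensitive substring comparison, and the whitespace normalization are all assumptions, and the page text would come from the scraper rather than a string literal.

```javascript
// Hypothetical constant mirroring the 10-keyword cap described above.
const MAX_KEYWORDS = 10;

/**
 * Return the keywords that occur in the given page text.
 * Matching is a case-insensitive substring search (an assumption).
 */
function findKeywordMatches(pageText, keywords) {
  if (keywords.length > MAX_KEYWORDS) {
    throw new RangeError(`At most ${MAX_KEYWORDS} keywords are allowed`);
  }
  // Collapse whitespace so phrases split across lines still match.
  const haystack = pageText.replace(/\s+/g, ' ').toLowerCase();
  return keywords.filter((keyword) => {
    const needle = keyword.trim().toLowerCase();
    return needle.length > 0 && haystack.includes(needle);
  });
}

const sampleText = 'We are hiring a Senior\nBackend Engineer in Berlin.';
console.log(findKeywordMatches(sampleText, ['backend engineer', 'designer']));
```

Substring matching is the simplest choice and will also match inside longer words; a word-boundary regular expression would be stricter if that turns out to matter.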

Scanning Options

- Manual Scanning: Users can trigger on-demand scans (limited to 4 per day)

- Automated Monitoring: Background scans run every 6 hours

- Real-time Results: Match details displayed in the user dashboard
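One way the 4-per-day cap on manual scans could work is a per-user counter that resets when the calendar day changes. The sketch below is an assumption about the approach: `registerManualScan`, the `{ day, count }` record shape, and the UTC day boundary are hypothetical, and in the app the record would presumably live in the database rather than in a local variable.

```javascript
// Hypothetical constant mirroring the "4 manual scans per day" rule.
const DAILY_SCAN_LIMIT = 4;

// UTC calendar day (YYYY-MM-DD), used as the counter's reset boundary.
function dayKey(date) {
  return date.toISOString().slice(0, 10);
}

/**
 * Decide whether a manual scan is allowed and return the updated record.
 * `record` is a plain object { day, count }, or null for a first-time user.
 */
function registerManualScan(record, now = new Date()) {
  const today = dayKey(now);
  // Start a fresh counter on the first scan of a new day.
  const current = record && record.day === today ? record : { day: today, count: 0 };
  if (current.count >= DAILY_SCAN_LIMIT) {
    return { allowed: false, record: current, remaining: 0 };
  }
  const updated = { day: today, count: current.count + 1 };
  return { allowed: true, record: updated, remaining: DAILY_SCAN_LIMIT - updated.count };
}

// Five attempts on the same day: the fifth should be refused.
let record = null;
for (let i = 1; i <= 5; i += 1) {
  const result = registerManualScan(record, new Date('2024-01-01T12:00:00Z'));
  record = result.record;
  console.log(`scan ${i}: allowed=${result.allowed}, remaining=${result.remaining}`);
}
```

Keeping the function pure (record in, record out) makes the limit easy to test without a database; the caller decides how to persist the returned record.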

Notifications & Alerts

- Email Notifications: Consolidated alerts sent when keywords are found on monitored sites

- Customizable Alerts: Users can add or remove email notifications based on preference

- Match Management: Users can review and delete previous matches from their dashboard
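Consolidating alerts amounts to grouping the matches from a scan before building a single message. The following is a rough sketch under assumed names: `groupMatchesByUrl`, `buildAlertBody`, and the `{ url, keyword }` match shape are illustrative, and the output is plain text rather than the HTML email the app sends.

```javascript
/**
 * Group individual matches by URL so one email can cover a whole scan.
 * Each match is assumed to look like { url, keyword }.
 */
function groupMatchesByUrl(matches) {
  const grouped = new Map();
  for (const { url, keyword } of matches) {
    if (!grouped.has(url)) grouped.set(url, new Set());
    grouped.get(url).add(keyword); // the Set drops duplicate keywords per URL
  }
  return grouped;
}

/** Build a plain-text body for a single consolidated alert. */
function buildAlertBody(matches) {
  const lines = [];
  for (const [url, keywords] of groupMatchesByUrl(matches)) {
    lines.push(`${url}: ${[...keywords].join(', ')}`);
  }
  return lines.join('\n');
}

const matches = [
  { url: 'https://example.com/careers', keyword: 'engineer' },
  { url: 'https://example.com/careers', keyword: 'remote' },
  { url: 'https://example.org/news', keyword: 'engineer' },
];
console.log(buildAlertBody(matches));
```

Because `Map` and `Set` preserve insertion order, URLs and keywords appear in the order they were first found.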

User Experience

- Intuitive Dashboard: Clean interface with separate sections for watchlist management and results

- Feedback System: Built-in mechanism for users to submit feedback to improve the application

Technical Implementation

Backend Architecture

- Node.js & Express: RESTful API handling user authentication, watchlist management, and scraping functions

- MongoDB with Mongoose: Database design with schemas for users, watchlists, match results, and scan limits

- Web Scraping: Cheerio for fast HTML parsing with intelligent error handling

- JWT Authentication: Secure token-based user authentication with middleware protection

- Scheduled Tasks: node-cron for automated periodic scanning
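To illustrate what middleware protection can look like, here is a dependency-free sketch of an Express-style guard. The names `requireAuth` and `verifyToken` and the `Authorization: Bearer <token>` convention are assumptions; the verifier is injected so the example runs on its own, whereas a real route would likely pass a function that wraps `jwt.verify(token, secret)` from the `jsonwebtoken` package.

```javascript
/**
 * Build Express-style middleware that rejects requests without a valid
 * bearer token. `verifyToken` must return the token payload or throw.
 */
function requireAuth(verifyToken) {
  return function authMiddleware(req, res, next) {
    const header = req.headers.authorization || '';
    const [scheme, token] = header.split(' ');
    if (scheme !== 'Bearer' || !token) {
      return res.status(401).json({ error: 'Missing bearer token' });
    }
    try {
      // Attach the decoded payload so later handlers know who is calling.
      req.user = verifyToken(token);
      return next();
    } catch (err) {
      return res.status(401).json({ error: 'Invalid token' });
    }
  };
}

// Stand-in verifier for demonstration only; this is not real JWT validation.
const fakeVerify = (token) => {
  if (token !== 'good-token') throw new Error('bad token');
  return { id: 'user-1' };
};

// Minimal fake request/response objects, just enough to exercise the guard.
const req = { headers: { authorization: 'Bearer good-token' } };
const res = {
  status() { return this; },
  json(body) { console.log(body); return this; },
};
requireAuth(fakeVerify)(req, res, () => console.log('authenticated as', req.user.id));
```

In an Express app the guard would be mounted ahead of the protected routes, for example on the watchlist router, so that handlers can rely on `req.user` being set.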

Smart Features

- Daily Scan Limits: Logic to prevent excessive use while providing valuable free functionality

- Email Deliverability: Properly configured HTML emails with headers to prevent content from being treated as quoted text

- Error Handling: Robust error management for URL processing, scraping, and API requests

Deployment & Monitoring

- Environment Configuration: Dotenv for secure management of sensitive credentials

- Scalable Architecture: Code structured for easy maintenance and future feature additions

Challenges Overcome

One of the major technical challenges was managing the scraping process efficiently. I implemented several solutions:

- Rate Limiting: Created a daily scan count system to prevent excessive server load

- Error Resilience: Added robust error handling for network issues and malformed URLs

- Notification Management: Developed a consolidated email system that groups matches rather than sending multiple alerts

- Processing Logic: Designed the scraper to handle various URL formats and invalid inputs
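Handling varied URL formats and invalid inputs could look roughly like the sketch below, which uses Node's built-in WHATWG `URL` class. The function name `normalizeUrl`, the choice to default scheme-less input to `https://`, and the decision to return `null` instead of throwing are assumptions made for illustration.

```javascript
/**
 * Normalize a user-supplied URL before scraping. Returns a canonical
 * http(s) URL string, or null when the input cannot be used.
 */
function normalizeUrl(input) {
  const trimmed = String(input).trim();
  if (trimmed === '') return null;
  // Prepend a scheme when the user typed something like "example.com/jobs".
  const hasScheme = /^[a-z][a-z0-9+.-]*:\/\//i.test(trimmed);
  const candidate = hasScheme ? trimmed : `https://${trimmed}`;
  try {
    const url = new URL(candidate);
    // Only web pages can be scraped; reject ftp:, file:, and similar.
    if (url.protocol !== 'http:' && url.protocol !== 'https:') return null;
    url.hash = ''; // fragments are never sent to the server
    return url.toString();
  } catch (err) {
    return null; // new URL() throws a TypeError on malformed input
  }
}

for (const raw of ['example.com/jobs', '  HTTP://Example.com  ', 'ftp://example.com', 'not a url']) {
  console.log(JSON.stringify(raw), '->', normalizeUrl(raw));
}
```

Returning `null` lets the caller skip a bad entry and keep scanning the rest of the watchlist, so one malformed URL does not abort the whole run.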

Key Learnings

- Gained practical experience building a complete RESTful API with authentication

- Developed deeper understanding of scheduled tasks and background processes in Node.js

- Implemented best practices for email deliverability and notification systems

- Created a MongoDB schema design that efficiently supports user-specific data and monitoring

This project demonstrates my ability to build practical, user-focused web applications that solve real-world problems while incorporating security best practices and efficient data processing.